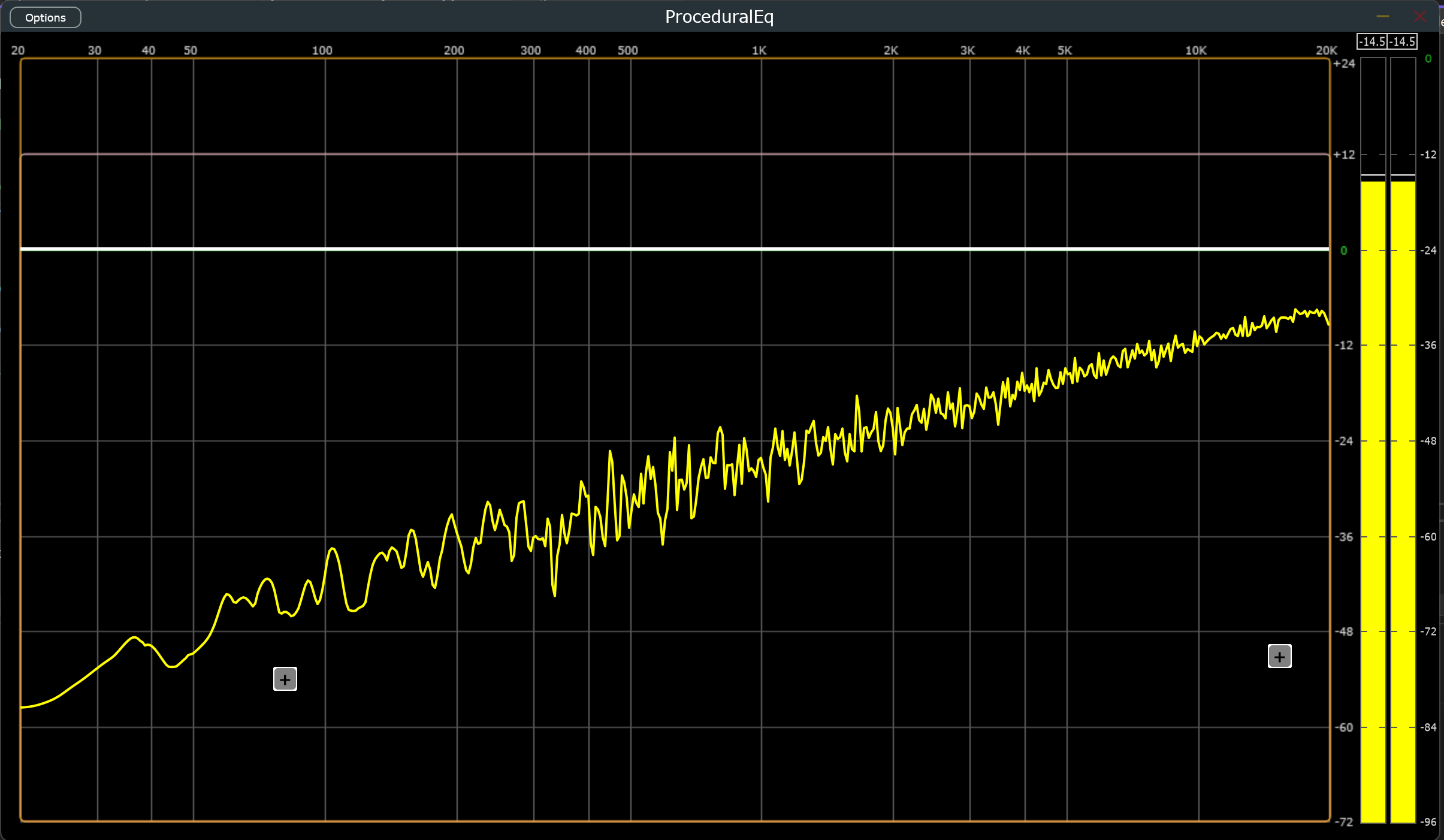

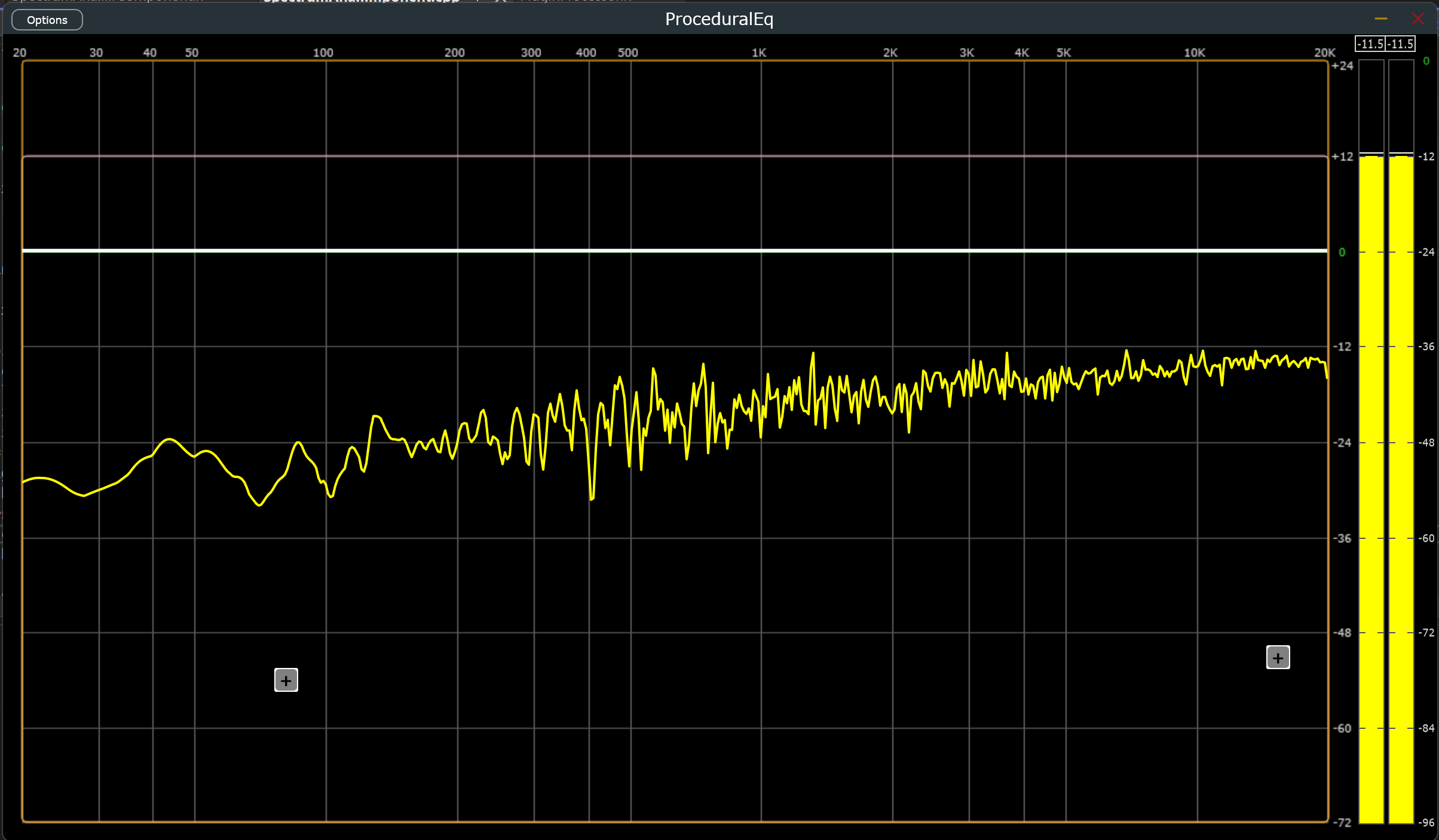

Two of my three big-ticket items from last week are done, with a lot of progress on the third. Zipper noise and parameter-change clicks are now entirely removed, which is huge. The spectrum analyzer still shows minor inconsistencies in the low end, but it's noticeably more stable, a bit faster, and much prettier than it was a week ago.

To follow up from last week's issues:

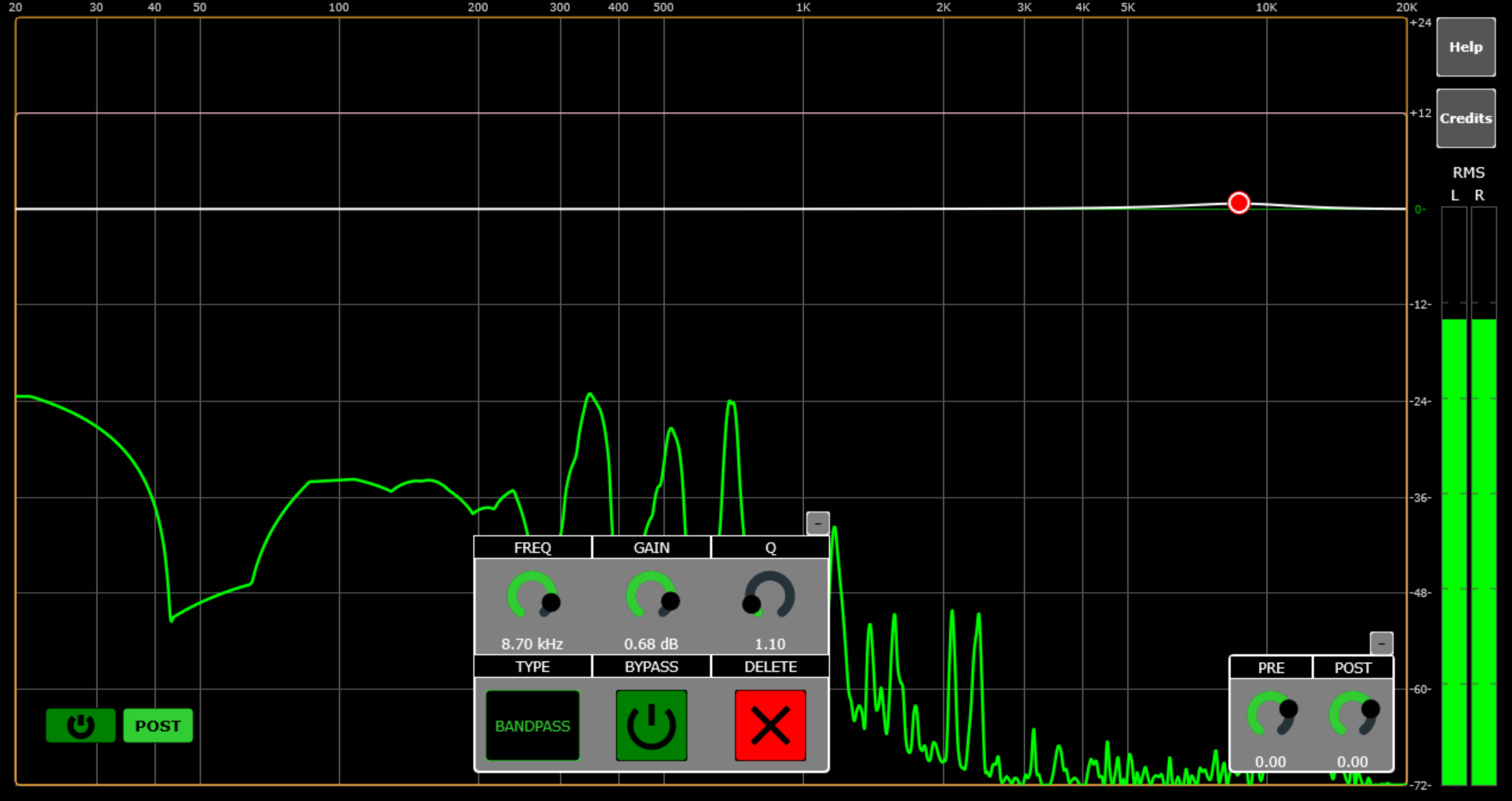

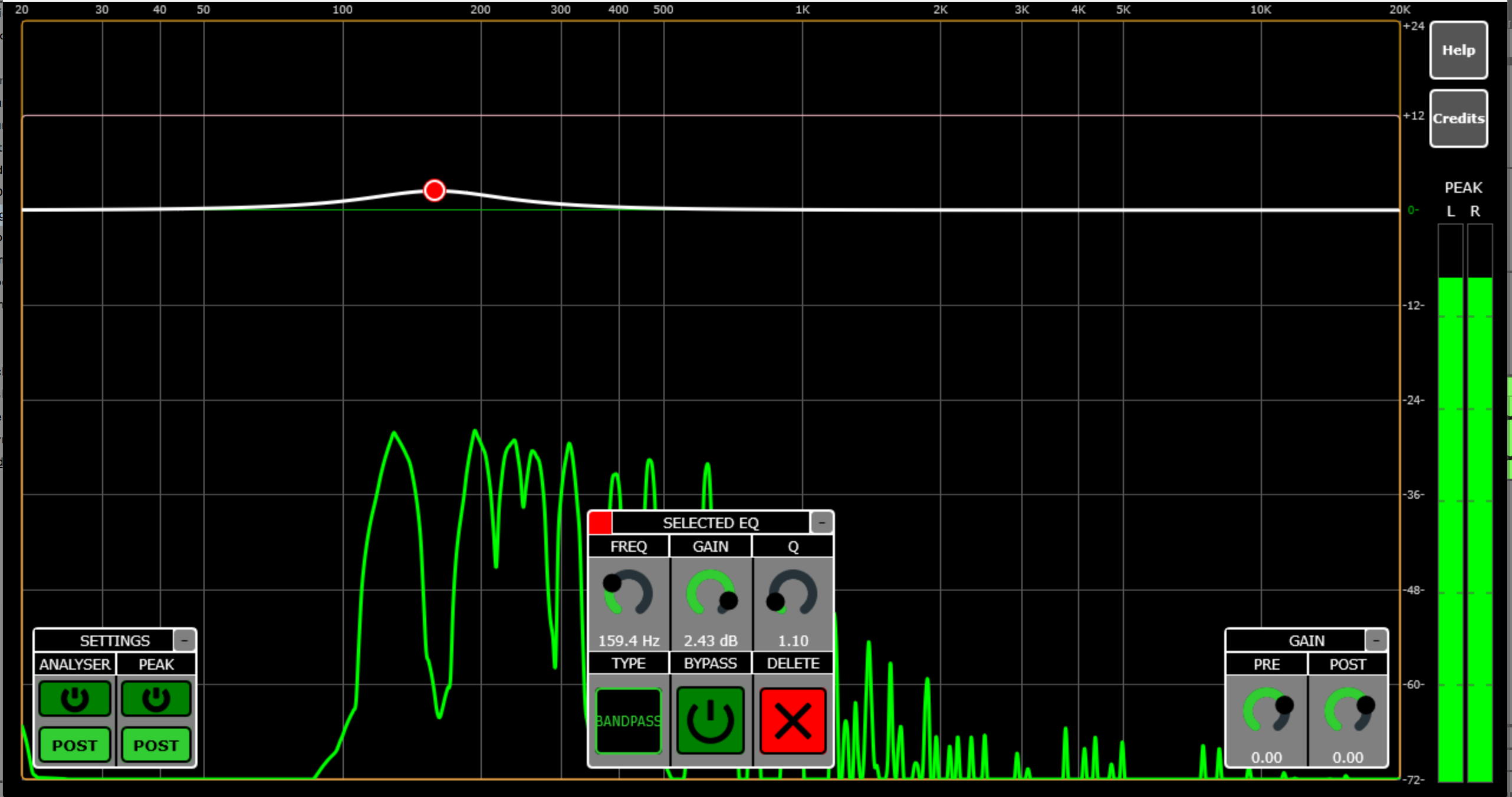

- Response curve rendering no longer exhibits issues associated with high slopes. This turned out not to be a graphics problem at all. The curve was just too honest, exposing some inherent shortcomings of high-Q digital filters at very low frequencies.

- Spectral analysis still shows occasional inconsistencies after extensive testing, but far fewer than before. Along the way, the component received major optimizations and quality improvements.

- Noise from coefficient updates is completely eliminated!!! This was the big one.

There was a story I used to hear about Edison making the lightbulb, and how he failed 1000 times and said he didn't regret it because he learned 1000 ways not to make a lightbulb. That's how I feel after finally cracking IIR coefficient swapping without zipper noise or clicks. I have tried so many things with wildly varying degrees of success, but the solution is here now and I'm super excited about it.

If you prowl enough DSP forums, you'll see a few common recommendations for this problem: switch to a state variable filter (SVF), smooth your parameter changes, or crossfade between double-buffered filters. Other suggestions float around too, most of them worse, but these are the three most common.

I tried all of these and many other solutions while trying to make the JUCE classes work. Each of them generally requires changes throughout the codebase, and with so many filter types, those changes cascade and add even more complexity.

The SVF decision is clear. It's substantially less versatile than IIR filters. Having no noise is great, but with only 3 filter types, it's not feasible.

Parameter smoothing alone doesn't solve the core issue: coefficient swaps still cause recursive state variables to temporarily blow up without a reset, producing audible clicks.

Lastly, the crossfade suggestion. It's super expensive computationally and takes up double the memory for every filter stage. It's brutal, and the volume won't stay consistent without even more math to account for loudness changes: linear interpolation and dB don't play well together if you want to avoid a noticeable dip in volume during the crossfade. So on top of all the other costs, multiplicative interpolation is needed, and it may have to carry over across process blocks. Even when you get all of that working, it still has zipper noise, just less of it. I knew there had to be a better way.

The True Zipper Noise Fix:

My hypothesis was this: rather than lerp the parameters, lerp the coefficients themselves, because the large jumps in coefficients were what caused the zipper noise. This wound up being right, but it also revealed a second issue: recursive state variables struggling to re-sync under large per-sample coefficient changes.
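To make the idea concrete, here is a minimal sketch of per-sample linear interpolation of biquad coefficients. It is not the actual SmoothFilter/SmoothCoeffs code: the `LerpBiquad` name, the coefficient ordering, and the transposed direct form II structure are all assumptions made for illustration.

```cpp
#include <array>
#include <cstddef>

// Hypothetical illustration only; not the SmoothFilter/SmoothCoeffs classes from this project.
struct LerpBiquad
{
    // Normalized coefficients in the order b0, b1, b2, a1, a2 (a0 is assumed to be 1).
    std::array<float, 5> current { 1.0f, 0.0f, 0.0f, 0.0f, 0.0f };
    std::array<float, 5> target  { 1.0f, 0.0f, 0.0f, 0.0f, 0.0f };
    std::array<float, 5> step    { 0.0f, 0.0f, 0.0f, 0.0f, 0.0f };
    int samplesRemaining = 0;

    // Transposed direct form II state. It is never reset when coefficients change.
    float s1 = 0.0f, s2 = 0.0f;

    // Start a linear ramp from the current coefficients to newCoeffs.
    void setTarget (const std::array<float, 5>& newCoeffs, int rampSamples)
    {
        target = newCoeffs;

        if (rampSamples <= 0)
        {
            current = target;
            samplesRemaining = 0;
            return;
        }

        for (std::size_t i = 0; i < current.size(); ++i)
            step[i] = (target[i] - current[i]) / static_cast<float> (rampSamples);

        samplesRemaining = rampSamples;
    }

    float processSample (float x)
    {
        if (samplesRemaining > 0)
        {
            if (--samplesRemaining == 0)
            {
                current = target; // land exactly on the target, no accumulated drift
            }
            else
            {
                for (std::size_t i = 0; i < current.size(); ++i)
                    current[i] += step[i];
            }
        }

        const float y = current[0] * x + s1;
        s1 = current[1] * x - current[3] * y + s2;
        s2 = current[2] * x - current[4] * y;
        return y;
    }
};
```

The point of the sketch is that the filter state (`s1`, `s2`) keeps running while the coefficients move a small step every sample, so there is never a single large jump for the recursion to react to.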

This ramp time issue with the Smoothed Value coefficients was super interesting. At anything over 20 ms, the state variables of the filters had trouble handling the changes per sample and would impulse-pop at low frequencies. At anything below 6 ms, the zipper noise came back. At around 10 ms, both problems disappeared: smooth sweeps, no clicks, and no zipper noise across all parameter changes!
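For a sense of scale, a ramp time in milliseconds turns into a ramp length in samples like this. The helper name is hypothetical, and the 48 kHz and 44.1 kHz figures in the usage note are just example sample rates, not settings taken from this project.

```cpp
#include <cmath>

// Hypothetical helper: converts a ramp time in milliseconds to a whole number of samples.
inline int rampLengthInSamples (double sampleRate, double rampMs)
{
    return static_cast<int> (std::lround (sampleRate * rampMs * 0.001));
}
```

At 48 kHz, the 10 ms sweet spot works out to 480 samples per ramp (441 at 44.1 kHz), while the 6 ms and 20 ms limits correspond to 288 and 960 samples.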

Achieving this required rebuilding JUCE's Coefficients, Filter, and ProcessorDuplicator concepts from the ground up, since their implementations are tightly coupled. While doing so, I stripped out templated features I didn't need and purpose-built everything for this EQ. The new system uses slightly more memory, but performance is comparable across the board, and in some cases faster, even with adding the internal smoothing, bypass handling, and coefficient replacement logic.

I can't stress enough how happy I am with these custom classes. They handle so much that they make my processor's logic much simpler too, especially after housing both the coeffs and filter in a struct that covers all of the initialization. This was easily the trickiest problem to solve in this project, and this solution was super satisfying to reach. The rest from here is just validation, polish, and cleanup. As far as functionality is concerned, these filters are officially of professional quality!

Completed This Week

Hand-rolled IIR Filter System (SmoothFilter, SmoothCoeffs, & EqStage in /Utils/Processing.h):

- Completely rewrote Coefficients & Filter classes to implement per-sample linear interpolation of coefficients on all parameter changes

- Built in mono/stereo compatibility with simpler and faster logic than the JUCE ProcessorDuplicator class

- Maintained thread-safe reads for GUI while adhering to JUCE safety standards

- Reworked all parameter updates, filter initialization, prepare-to-play logic, and process block logic to accommodate custom classes

- Created parent struct to hold both classes and streamline initialization

- Successfully eliminated all zipper noise and clicks from parameter changes!!!

Response Curve Component (ResponseCurveComponent.h/.cpp in /Components/Visualization/):

- Implemented ideal analog equation from parameters for more accurate magnitude readings (Peak and Notch filters only)

- Squashed lingering bugs in curve rendering

- Further optimized repaint calls by caching the drawn path

- Aligned curve frame drawing behavior with spectrum drawing for consistency

- Reduced lag between response curve and button interactions to acceptable levels
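As an illustration of the "ideal analog equation" item above, here is a sketch of how a peak filter's magnitude can be computed straight from its parameters. It uses the analog peaking prototype from the RBJ Audio EQ Cookbook, which is an assumption on my part about the form of the equation; the function name and signature are hypothetical, and the notch case is not shown.

```cpp
#include <cmath>

// Hypothetical helper: magnitude in dB of an ideal analog peaking (bell) filter.
// Based on the RBJ cookbook analog prototype
//   H(s) = (s^2 + s*(A/Q) + 1) / (s^2 + s/(A*Q) + 1)
// evaluated at s = j*w, where w = freqHz / centreHz and A = 10^(gainDb / 40).
inline double analogPeakMagnitudeDb (double freqHz, double centreHz, double q, double gainDb)
{
    const double A = std::pow (10.0, gainDb / 40.0);
    const double w = freqHz / centreHz;

    const double re    = 1.0 - w * w;    // shared real part of numerator and denominator
    const double numIm = w * A / q;      // imaginary part of the numerator
    const double denIm = w / (A * q);    // imaginary part of the denominator

    const double magSq = (re * re + numIm * numIm) / (re * re + denIm * denIm);
    return 10.0 * std::log10 (magSq);    // 10*log10(|H|^2) equals 20*log10(|H|)
}
```

At the centre frequency this returns exactly the gain parameter, and far away from it the result tends to 0 dB. Because nothing here depends on the sample rate, the curve does not pick up the warping a digital filter shows near Nyquist.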

Code Organization & Documentation:

- Added comprehensive documentation throughout codebase

- Separated all components into individual files

- Organized components into a logical directory structure